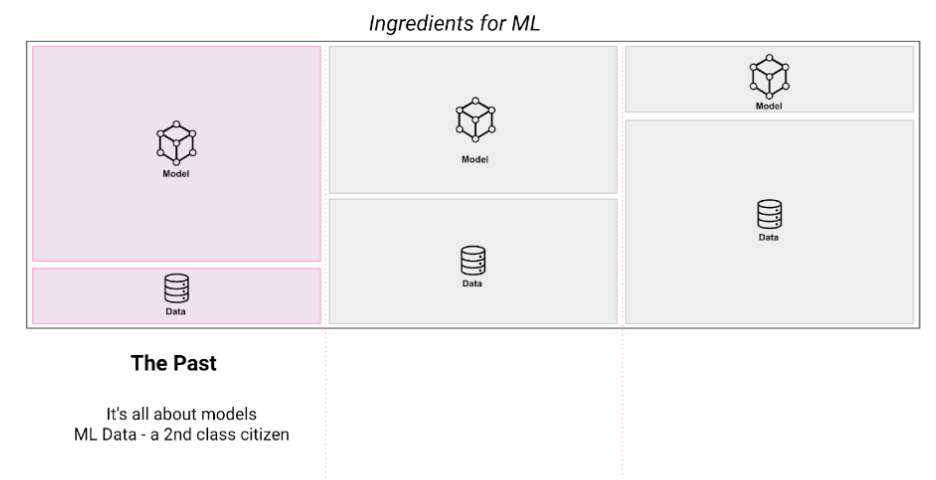

“ML Data” : The past, present and future

Over the past decade, I've had the privilege of being part of teams building the foundational technology behind some of the biggest Machine Learning platforms: first as an early engineer building the foundations of Siri at Apple, then building and scaling the world’s first Feature Store, and then building data quality systems for one of the largest ML Platforms on the planet at Uber (learning: shine a light on your data; you will be shocked by how bad it is!). I recently wrote a paper with the fine folks at the Stanford AI Lab to evangelize the concept of Embeddings Stores (adoption of ML on unstructured data is exploding!), and I am now building the first ML Data Intelligence platform at Galileo (more on this below!).

Through these experiences, I’ve seen an evolution in how we think about data for Machine Learning. I want to share my thoughts on how the criticality of ML data has evolved and why I think organizations that obsess over the quality of their ML Data will quickly and vastly outperform those that focus on the model alone.

Let’s dive into this, but like everything else, it’s easier when we first step back and peer into how we got here.

The Past: Commoditization of storage and compute, and the rise of ML Platforms

By the time the 2010s hit, Data Engineers had immense data and batch compute resources at their disposal with Hadoop, MapReduce and eventually Spark, leading to the Big Data revolution. During this time, analytics systems became the prime consumers of vast amounts of data, which became critical for organizations acting on data-driven insights.

Large compute platforms evolved to offer SQL-like interfaces as well as programmatic SDKs on top of these frameworks, allowing a user to run complex transformations on gigabytes of data. At Apple, for example, we wrote dozens of batch analytics jobs that ran daily and collated reports on Siri usage across millions of Apple devices around the globe.

An outcome of this was the Data Sprawl problem, where the proliferation of ad-hoc jobs without a proper data management layer led to large amounts of duplicated compute, redundant data and a general disarray in how data processing was organized. The problem became rampant at scale, turning data warehouses into data landfills.

In recent years, similar problems manifested themselves in ML platforms where ad-hoc data generation jobs built from batch and streaming sources created a messy ML data ecosystem. Choosing the right, error-free, representative data for your ML task became equivalent to finding a needle in a haystack.

In parallel, Machine Learning techniques advanced, and frameworks like TensorFlow became popular, exposing easy-to-use SDKs for developers to build complex neural networks and tune hyperparameters. PyTorch accelerated this further by making Deep Learning models possible to build with very little code.

Similar advancements were made in classical Machine Learning techniques. Standard Decision Tree based techniques were replaced by Gradient Boosted Trees, which significantly outperformed their older counterparts.

Around this time, the world of ML collided with Big Data, and the problems previously encountered in non-ML big data systems manifested themselves in Machine Learning systems. My team at Uber, for example, was one of the first to publicly evangelize potential solutions to this problem when we built Michelangelo, a large-scale ML Platform that all Data Scientists at Uber used to train and deploy models.

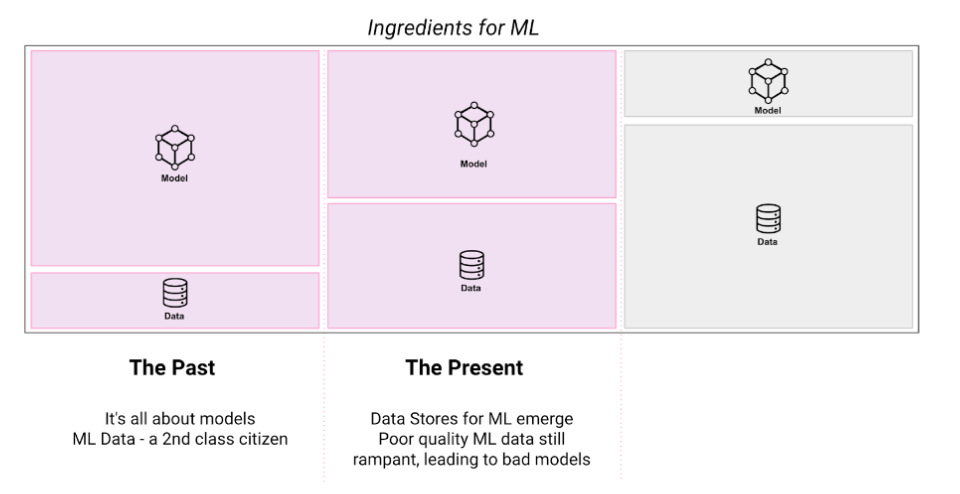

The Present: “Data powers ML. How to tame the beast?” The rise of ‘ML data’ stores

The Data Sprawl issue in ML became such a big pain point that it led to the next phase of advancements in ML platforms, centered on managing the lifecycle of the data that models consume across training, evaluation and inference. This gave rise to 'ML Data stores'.

As ML platforms (e.g. C3, SageMaker, DataRobot) grew into one-stop shops where larger organizations managed all their ML models, the simultaneous training and deployment jobs of multiple models, combined with a general lack of management of the data these models consumed, led to massive data bottlenecks. This created the need for robust ML data management solutions that let multiple models consume the same data without duplicating compute and storage, reducing data fan-out issues as well as operational costs.

The solution to these key challenges came in the form of Feature Stores for structured and semi-structured data, and their counterparts for unstructured data (embeddings), which massively simplified the authoring and consumption of ML features across the different stages of the ML workflow.
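To make the idea concrete, here is a minimal in-memory sketch of the core Feature Store contract: a feature is defined once and then read identically at training time and at inference time. The class name `FeatureStore`, its methods, and the example features are hypothetical and chosen only for illustration; production systems add persistence, versioning, point-in-time correctness and low-latency serving on top of this.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class FeatureStore:
    """Toy feature store: hypothetical API, not any real product's interface."""

    # Feature name -> function that derives the feature from a raw record.
    definitions: Dict[str, Callable[[dict], float]] = field(default_factory=dict)
    # Entity id -> materialized feature values.
    rows: Dict[str, Dict[str, float]] = field(default_factory=dict)

    def register(self, name: str, fn: Callable[[dict], float]) -> None:
        """Author a feature once so that every model shares one definition."""
        self.definitions[name] = fn

    def materialize(self, entity_id: str, raw: dict) -> Dict[str, float]:
        """Compute all registered features for one entity and store them."""
        row = {name: fn(raw) for name, fn in self.definitions.items()}
        self.rows[entity_id] = row
        return row

    def get_online_features(self, entity_id: str, names: List[str]) -> List[float]:
        """Inference-time read: feature vector for a single entity."""
        row = self.rows[entity_id]
        return [row[n] for n in names]

    def get_training_frame(self, names: List[str]) -> List[List[float]]:
        """Training-time read: the same features for all entities, ordered by id."""
        return [[row[n] for n in names] for _, row in sorted(self.rows.items())]


def build_example_store() -> FeatureStore:
    """Small usage example with made-up rider data."""
    store = FeatureStore()
    store.register("trip_count", lambda raw: float(len(raw["fares"])))
    store.register(
        "avg_fare",
        lambda raw: sum(raw["fares"]) / len(raw["fares"]) if raw["fares"] else 0.0,
    )
    store.materialize("rider_1", {"fares": [10.0, 20.0]})
    store.materialize("rider_2", {"fares": []})
    return store
```

Because both read paths go through the same stored values, the model sees the same feature logic in training and in production, which is the duplication and fan-out problem described above.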

The past few years have seen Feature Store technologies become part of various popular ML Platforms (Google Cloud Vertex AI, Amazon SageMaker, Databricks), alongside a large number of mid-sized firms building such ML Data stores as stand-alone services.

With the advent of Transformers and machine learning on unstructured data taking off, we will see these ML data management and storage technologies expand to house pre-trained embeddings in one place. Large organizations such as Google and Uber have had teams managing reusable embedding stores for a while. With unstructured ML data proliferating within businesses, these technologies will soon be commoditized.

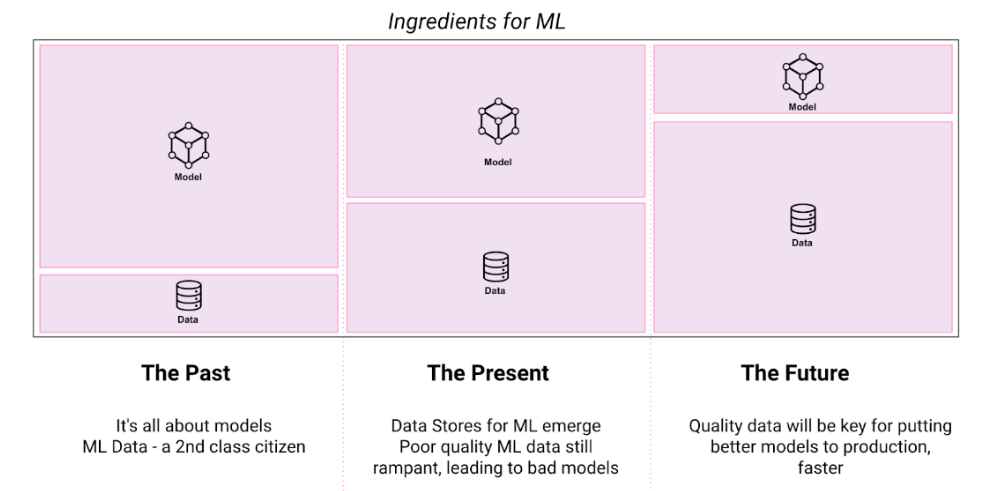

The Future: “Less, high-quality data strongly preferred over more, poor-quality data” – The rise of ‘ML data intelligence’

To recap, three key advancements have charted the MLOps revolution over the last few years: better management of ML data, the commoditization of off-the-shelf pre-trained models, and the advent of powerful ML frameworks that make model development a breeze.

Despite these advancements, the quality of ML systems still suffers from three critical challenges:

- It's very hard to determine the right data to label and train models on from the vast amounts of data available.

- It takes several weeks of rigorous data debugging to understand how the model is training on the data, and to course-correct by fixing the data that is pulling the model down.

- It's difficult to catch the signals that a model needs retraining, given the different forms of drift occurring in production traffic, and to act on them.

At their core, these problems stem from the fact that little attention is paid to the quality and relevance of the ML data used to train and assess these models. This has been a key learning from my many years of developing ML platforms.

Observing what data a model interacts with at each stage of its life-cycle is a major differentiating factor in productionizing high-quality models that can be trusted. Without this practice, ML is perceived as a black box, which in the long run can bring down the quality of the downstream applications that consume model outputs.

At Uber, we built advanced observability tooling for the thousands of ML and Feature pipelines running every day. This tooling:

- Auto-detected different categories of errors, then filtered, fixed and augmented the data that pulled the model's performance down.

- Automatically failed pipelines that did not meet a certain quality bar.

- Used methods from information theory to auto-select the right features for models, thereby weeding out bad quality data.
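The last two ideas can be sketched in a few lines. The code below is not the tooling described above; it is a minimal illustration under stated assumptions: the quality bar is a maximum null rate per required column, and the information-theoretic criterion is mutual information between a discrete feature and the label. The names `DataQualityError`, `enforce_quality_gate`, `select_features` and the thresholds are hypothetical.

```python
import math
from collections import Counter
from typing import Dict, Hashable, List, Optional, Sequence


class DataQualityError(Exception):
    """Raised to fail a pipeline run whose data misses the quality bar."""


def enforce_quality_gate(
    rows: Sequence[Dict[str, Optional[float]]],
    required: Sequence[str],
    max_null_rate: float = 0.05,  # assumed threshold, tune per pipeline
) -> None:
    """Fail fast if any required column has too many missing values."""
    if not rows:
        raise DataQualityError("no rows to validate")
    for col in required:
        nulls = sum(1 for row in rows if row.get(col) is None)
        rate = nulls / len(rows)
        if rate > max_null_rate:
            raise DataQualityError(
                f"column {col!r} null rate {rate:.2%} exceeds {max_null_rate:.2%}"
            )


def mutual_information(xs: Sequence[Hashable], ys: Sequence[Hashable]) -> float:
    """I(X;Y) in bits, estimated from counts of two discrete sequences."""
    if len(xs) != len(ys) or not xs:
        raise ValueError("xs and ys must be non-empty and of equal length")
    n = len(xs)
    count_x, count_y = Counter(xs), Counter(ys)
    count_xy = Counter(zip(xs, ys))
    # p(x,y) * log2( p(x,y) / (p(x) p(y)) ), written in terms of raw counts.
    return sum(
        (c / n) * math.log2(c * n / (count_x[x] * count_y[y]))
        for (x, y), c in count_xy.items()
    )


def select_features(
    columns: Dict[str, Sequence[Hashable]],
    labels: Sequence[Hashable],
    min_bits: float = 0.1,  # assumed cut-off, purely illustrative
) -> List[str]:
    """Keep features that share at least `min_bits` with the label, best first."""
    scores = {name: mutual_information(vals, labels) for name, vals in columns.items()}
    kept = [name for name, score in scores.items() if score >= min_bits]
    return sorted(kept, key=lambda name: -scores[name])
```

A feature that perfectly predicts a balanced binary label scores 1 bit, while a feature independent of the label scores 0 bits and is dropped. Continuous features would need binning or a different estimator before this applies.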

This resulted in significant improvements across thousands of models running in Uber's production environment. The saliency of downstream applications consuming model outputs improved, thereby driving up key business metrics across different product verticals.

Today, the growing rate of AI adoption in the rest of the industry points to the same trend we've seen at larger, more technologically advanced companies. The rapid adoption of ML Platforms will significantly increase the ML footprint across more products. But the more models are productionized, the greater the need to ensure that they are trained and evaluated on high-quality data. This will only gain significance in the coming years.

This is why the next challenge for ML practitioners will center primarily on two processes:

- Automating the derivation of key insights from the data and its interaction with the models

- Fixing the data to ensure high-quality models across the workflow, whether you are formulating and designing your ML use case, deciding what data and labels to use, choosing what data to label, debugging latent errors and biases in datasets, or monitoring drift, training-inference skew and the new clusters of data the model receives from the world, and then quickly taking action to fix them.
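As one illustration of the drift monitoring mentioned above, the sketch below computes the Population Stability Index (PSI) between a feature's training-time values and its production values. PSI is one common choice among many and is used here as an assumption, not as the method this post prescribes; the function name, bin count and `eps` floor are hypothetical.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,  # floor that keeps empty bins out of log(0)
) -> float:
    """PSI between a reference sample (training) and a live sample (production)."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    # Equal-width bins over the reference range; fall back to width 1.0
    # when every reference value is identical.
    width = (hi - lo) / bins or 1.0

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Out-of-range production values are clamped into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        return [max(c / len(values), eps) for c in counts]

    ref, live = proportions(expected), proportions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(ref, live))
```

Identical samples give a PSI of exactly 0, and the value grows as production traffic moves away from the training distribution. A frequently quoted rule of thumb treats values above roughly 0.25 as a signal to investigate or retrain, but any threshold should be validated against your own models.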

For businesses that want to put artificial intelligence (AI) first, ML Data Intelligence is the means through which this may be accomplished.
